When your sales team is overwhelmed with leads but struggling to identify high-quality prospects, an AI-powered engagement scoring system can be a game-changer. Unlike traditional methods that rely on rigid rules, AI uses data-driven insights to prioritize leads based on their fit and intent, boosting accuracy to 80–95%. This approach can increase SQL conversion rates by 20–40% and shorten sales cycles by 15–25%.

Here’s how to build one:

- Define Key Signals: Focus on explicit data (e.g., job title, company size) and implicit behaviors (e.g., demo requests, pricing page visits). Combine these for better accuracy.

- Use Weighted Scoring: Assign points based on actions and attributes that align with your ideal customer profile. Include negative scoring for disqualifiers.

- Organize Data: Connect tools like CRMs, analytics platforms, and enrichment APIs to create a unified data source. Ensure data quality with validation and deduplication.

- Develop the AI Model: Train your model using historical data and structured rules. Use prompts to guide predictions and refine results with feedback loops.

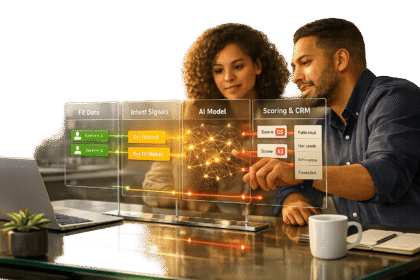

- Automate and Integrate: Link the AI system to your CRM for real-time scoring and lead routing. Use workflows to notify sales teams instantly about high-priority leads.

5-Step AI Engagement Scoring System Implementation Framework

Step 1: Define Your Engagement Signals and Scoring Framework

To build an effective AI scoring system, you need to identify the signals that truly matter. For example, a CEO downloading your pricing guide is a much stronger signal than someone casually reading a blog post. It’s also important to separate signals that indicate fit (the right type of customer) from those that show intent (their readiness to buy). For more practical tips, check out our free AI Acceleration Newsletter. Additionally, M Studio – part of M Accelerator – partners with founders to create AI-driven go-to-market strategies.

Now, let’s dive into the two main types of signals that shape your scoring framework.

Explicit vs. Implicit Signals

Explicit signals are the data points you gather directly from leads or through tools that enrich your data. These include details like job title, company size, industry, revenue, and geographic location. They help you evaluate whether a lead fits your Ideal Customer Profile (ICP). For instance, if you sell enterprise software, a lead from a 500-person SaaS company in your target industry would score higher than a freelancer using a personal email address.

Implicit signals, on the other hand, are behavioral indicators that show a lead’s buying intent. These include actions like visiting your website, clicking on emails, downloading content, viewing your pricing page, or requesting a demo. For example, a lead who visits your pricing page three times in a week is showing much stronger intent than someone who simply opened a newsletter.

Focusing only on explicit signals might help you identify a good fit but could miss the right timing, leading to premature outreach. Meanwhile, relying solely on implicit signals could result in chasing low-fit leads. By combining both types of data, AI can boost scoring accuracy from 50–70% to an impressive 80–95%.

How to Build a Weighted Scoring System

Using a 0–100 scale is a good starting point. It provides enough detail to differentiate leads without making the system overly complex. Assign points based on how strongly each signal correlates with closed deals in your business. For instance, high-intent actions like demo requests should earn the most behavioral points (roughly 20–50), while lower-intent actions like blog views might only earn 1–5 points.

Here’s an example of a weighted scoring framework:

| Signal Type | Example Attribute | Point Value | Rationale |
|---|---|---|---|
| Explicit | Target Industry (e.g., SaaS) | +30 | Strong indicator of deal potential |
| Explicit | Company Size (200+ employees) | +30 | Suggests enterprise-level budget |
| Implicit | Demo Request | +20 | Clear sign of purchase intent |
| Implicit | Pricing Page Visit (2+ times) | +15 | Shows active evaluation |
| Implicit | Content Download (Whitepaper) | +5 | Indicates early-stage interest |
| Negative | Competitor Domain | -50 | Disqualifies lead immediately |
| Negative | Inactivity (30 days) | -10 | Reduces priority for waning interest |

Negative scoring is equally important. Subtract 10–50 points for disqualifiers like competitor email domains, student addresses, or irrelevant job titles. This ensures your sales team focuses on leads with real potential.
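As a rough illustration of how the example table could be applied, here is a minimal Python sketch. The signal names (`target_industry`, `demo_request`, and so on) and the `score_lead` helper are hypothetical labels invented for this example; only the point values come from the table, and clamping the total to the 0–100 scale is an assumption about how out-of-range sums should be handled.

```python
# Point values copied from the example table; tune them against your own closed deals.
# The signal names are hypothetical labels, not fields from any specific CRM.
POINTS = {
    "target_industry": 30,
    "company_size_200_plus": 30,
    "demo_request": 20,
    "pricing_page_2_plus_visits": 15,
    "whitepaper_download": 5,
    "competitor_domain": -50,
    "inactive_30_days": -10,
}


def score_lead(signals: set[str]) -> int:
    """Sum the points for every known signal, then clamp to the 0-100 scale."""
    raw = sum(POINTS[name] for name in signals if name in POINTS)
    return max(0, min(100, raw))


strong_lead = {"target_industry", "company_size_200_plus", "demo_request"}
competitor = {"target_industry", "demo_request", "competitor_domain"}

print(score_lead(strong_lead))  # 80
print(score_lead(competitor))   # 0
```

Keeping the weights in one dictionary makes quarterly adjustments a one-line change rather than a rewrite of the scoring logic.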

To keep your scores accurate, apply decay rules. For example, if a lead has been inactive for 30 days, reduce their score by 5–10 points per month. This prevents outdated activity from inflating a lead’s current readiness and ensures your scoring reflects their most recent intent.


Step 2: Collect and Structure Your Data Sources

Once you’ve identified your engagement signals, the next step is gathering and organizing the data that powers them. The success of any AI model depends heavily on the quality of the data it processes. Start by connecting tools that provide the data you need. For instance, CRM platforms like Salesforce and HubSpot can supply historical sales data and lead activity. Website analytics tools such as Google Analytics and Segment are great for tracking behavioral signals, like visits to pricing pages or content downloads. If you’re in the B2B SaaS space, product usage data from your internal systems – such as the number of team members a user invites during a trial – can be one of your most valuable proprietary signals. Once data starts flowing in, ensure it’s well-organized to integrate seamlessly with your AI system.

To fill in the gaps, use third-party enrichment APIs like Brandfetch or Clearbit. These tools can add firmographic details, such as company size, industry, and funding status, based on email domains. Tools like Intercom or support ticket systems can reveal how leads engage with your team after initial contact. If you’re running paid campaigns, linking Google Ads and Meta APIs can help track offline conversions and adjust bids based on lead quality rather than just clicks.

Before diving into model development, conduct a data audit. Ideally, you’ll need at least 12 months of historical data on leads and opportunities to train an AI model effectively. For example, Salesforce Einstein requires at least 1,000 converted leads to function properly. If your CRM data is in good shape, building a custom scoring model can take as little as three weeks.

How to Integrate Data Sources

To get started, connect your main platforms through webhooks and native integrations. Webhooks enable real-time data flows – for example, sending leads from tools like Typeform or Webflow directly into your CRM. This ensures that leads can be scored almost instantly, often within three seconds of form submission, with notifications sent to tools like Slack. Use a unified identifier (like an email address or contact ID) across all platforms to enable accurate reporting and attribution.

Data quality is critical. Use tools like Google reCAPTCHA and ZeroBounce to validate form submissions and set up deduplication rules to avoid cluttering your CRM with redundant records. You can also use inline transformation scripts to extract company domains from email addresses (e.g., converting "john@acme.com" into "acme.com") so that enrichment APIs can automatically append firmographic data. Once your integrations are live and your data is validated, you can focus on structuring the data for maximum AI performance.
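The domain extraction and deduplication steps described above can be sketched in a few lines of Python. This is a simplified example, not a full validation pipeline: the `FREE_MAIL_DOMAINS` set is an illustrative, incomplete list, and the function names are hypothetical. It assumes each lead is a dictionary with an `email` key.

```python
from typing import Optional

# Illustrative, non-exhaustive list of personal email providers (an assumption).
FREE_MAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com"}


def company_domain(email: str) -> Optional[str]:
    """Return the lowercased company domain, or None for malformed or free-mail addresses."""
    local, sep, domain = email.strip().lower().rpartition("@")
    if not sep or not local or "." not in domain:
        return None
    return None if domain in FREE_MAIL_DOMAINS else domain


def dedupe_by_email(leads: list[dict]) -> list[dict]:
    """Keep the first record seen for each normalized email address."""
    seen: set[str] = set()
    unique = []
    for lead in leads:
        key = lead["email"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(lead)
    return unique


print(company_domain("john@acme.com"))  # acme.com
```

Returning `None` for personal addresses lets the workflow skip the enrichment call entirely instead of paying for a lookup that cannot return firmographic data.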

How to Structure Data for AI Models

AI models require structured data to work effectively. Start by organizing your data into JSON objects with key fields like company size, industry, founding year, and recent engagement activities. For Python-based workflows using tools like LightGBM, format your data into clear tabular columns for each signal. This allows the model to differentiate between early-stage behaviors (like reading blog posts) and late-stage intent signals (like requesting a demo).

Divide your data into four main categories: fit, intent, product usage, and integrity. Fit data comes from CRMs and enrichment tools, including details like industry, company size, and job title. Intent data tracks engagement through platforms like Google Analytics, monitoring actions such as pricing page visits or email clicks. Product usage data, stored in your internal databases, focuses on how leads interact with your product during trials. Integrity data from security tools identifies and filters out bots or suspicious activity. Structuring your data in this way helps the AI model weigh each category appropriately, providing more accurate lead scores based on both who the lead is and what they’re doing.
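One possible way to represent the four categories is a single typed record that can be flattened into a tabular row or serialized as JSON. The sketch below is an assumption about how such a record might look; the field names (`pricing_page_visits_7d`, `teammates_invited`, and so on) are hypothetical and should be replaced with the signals you actually collect.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class LeadRecord:
    # Fit: from the CRM and enrichment tools
    industry: str
    company_size: int
    job_title: str
    # Intent: from web and email analytics
    pricing_page_visits_7d: int
    email_clicks_30d: int
    # Product usage: from your internal database
    teammates_invited: int
    # Integrity: from bot and fraud checks
    is_suspected_bot: bool


lead = LeadRecord("SaaS", 500, "VP Sales", 3, 2, 4, False)

row = asdict(lead)         # flat dict, one column per signal, for tabular models
payload = json.dumps(row)  # JSON object for a prompt or webhook body

print(payload)
```

The same record feeds both paths: `row` can become one line of a training table, while `payload` can be passed to a prompt-based scorer.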

Properly organizing your data not only simplifies the model training process but also improves the accuracy of your lead scoring. At M Studio / M Accelerator, this approach has helped us structure data efficiently, driving measurable revenue growth for our clients.

Step 3: Build Your AI-Powered Scoring Model

Once your data is structured, it’s time to create the AI model that will analyze engagement signals and generate lead scores. Modern tools like OpenAI’s GPT-4o-mini and Claude are excellent at processing complex patterns across various data points simultaneously. Unlike traditional rule-based systems that simply tally points, AI models estimate the probability of specific outcomes – like whether a lead will become a Sales Accepted Lead (SAL) or contribute to a qualified pipeline. To get the best results, train your model using 12–24 months of quality historical data. This allows it to recognize patterns throughout the entire journey, from the first interaction to a closed deal.

The best models use in-context learning, where you guide the AI with specific prompts to evaluate leads based on your business logic.

How to Design AI Prompts for Scoring

In-context learning involves defining the AI’s role and providing clear scoring rules. For instance, you might instruct the AI: "You are a B2B lead scoring analyst for a SaaS company." Then, provide explicit scoring criteria, such as:

- "+30 points for Tech/SaaS companies"

- "+20 points for companies with 50–200 employees"

This ensures the model aligns with your Ideal Customer Profile (ICP) from the start. A sample output might look like this:

`{ "score": 85, "summary": "High-intent enterprise lead", "top_drivers": ["Matched ICP", "Pricing page visit", "Demo request"] }`

This format is easy for CRM or automation tools to parse. To keep scores consistent, set the AI’s temperature low (for example, 0.3). A lower temperature reduces randomness, although it does not guarantee identical output on every run.
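To make the prompt-and-parse flow concrete, here is a minimal sketch that builds chat-style messages and validates the model's JSON reply. It deliberately makes no API call: `build_messages` and `parse_score` are hypothetical helpers, the message layout is a common chat convention rather than any specific vendor's required format, and the validation rules are assumptions about what a CRM would need.

```python
import json

SYSTEM_PROMPT = (
    "You are a B2B lead scoring analyst for a SaaS company. "
    "Score the lead from 0 to 100 using these rules: {rules}. "
    'Reply with JSON only, using the keys "score", "summary", and "top_drivers".'
)

RULES = [
    "+30 points for Tech/SaaS companies",
    "+20 points for companies with 50-200 employees",
]


def build_messages(lead: dict) -> list[dict]:
    """Assemble chat-style messages to pass to whichever LLM client you use."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT.format(rules="; ".join(RULES))},
        {"role": "user", "content": json.dumps(lead)},
    ]


def parse_score(reply: str) -> dict:
    """Validate the model's reply before anything is written to the CRM."""
    data = json.loads(reply)
    score = data["score"]
    if isinstance(score, bool) or not isinstance(score, (int, float)) or not 0 <= score <= 100:
        raise ValueError(f"score out of range: {score!r}")
    if not isinstance(data.get("top_drivers"), list):
        raise ValueError("top_drivers must be a list")
    return data


sample_reply = (
    '{ "score": 85, "summary": "High-intent enterprise lead", '
    '"top_drivers": ["Matched ICP", "Pricing page visit", "Demo request"] }'
)
result = parse_score(sample_reply)
print(result["score"])  # 85
```

Rejecting malformed replies at this step means a bad generation surfaces as a retry or an alert rather than as a wrong number on a lead record.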

To build trust with sales teams, include explanations in your prompts. For example, ask the AI to justify each score. Sales reps are more likely to act on leads when they understand the reasoning behind the rating. As Ameya Deshmukh, VP of Marketing at EverWorker, puts it:

"If sales has to interpret the model output manually, you haven’t improved lead quality – you’ve just added another field."

By automatically generating insights like "Matched ICP + high pricing intent," the AI empowers sales teams to take immediate action. With clear prompts in place, you can now refine the scoring model by adding business-specific rules.

How to Add Business-Specific Rules

To make your scoring model even smarter, incorporate negative scoring to weed out unqualified leads. For example:

- Subtract 20 points for competitor email domains.

- Subtract 10 points for personal email addresses (e.g., Gmail, Yahoo).

- Subtract 25 points for job titles without purchasing authority.

This prevents sales teams from wasting time on leads that are unlikely to convert.

Another key tactic is score decay, which adjusts scores based on inactivity. For instance, reduce behavioral scores by 20% after 30 days of no engagement. This keeps your pipeline focused on active leads with genuine buying intent.

You can also use feature engineering to convert raw data into predictive insights. Instead of simply tracking "website visits", create variables like:

- "Frequency of visits in the past 7 days"

- "Engagement depth across product pages"

These features help the model distinguish between casual browsers and serious buyers.
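As an illustration, the two features above could be derived from raw page-view events roughly as follows. The event shape (a `timestamp` and a `path`), the `/product/` URL prefix, and the choice to measure engagement depth as the number of distinct product pages viewed are all assumptions made for this sketch.

```python
from datetime import datetime, timedelta

PRODUCT_PATH_PREFIX = "/product/"  # hypothetical URL scheme


def engagement_features(events: list[dict], now: datetime) -> dict:
    """Turn raw page-view events into two model features."""
    week_ago = now - timedelta(days=7)
    recent = [e for e in events if e["timestamp"] >= week_ago]
    product_pages = {e["path"] for e in events if e["path"].startswith(PRODUCT_PATH_PREFIX)}
    return {
        "visits_last_7d": len(recent),                 # frequency of visits in the past 7 days
        "distinct_product_pages": len(product_pages),  # one proxy for engagement depth
    }


now = datetime(2025, 1, 31, 12, 0)
events = [
    {"timestamp": now - timedelta(days=1), "path": "/pricing"},
    {"timestamp": now - timedelta(days=3), "path": "/product/alerts"},
    {"timestamp": now - timedelta(days=20), "path": "/product/routing"},
    {"timestamp": now - timedelta(days=2), "path": "/product/alerts"},
]
features = engagement_features(events, now)
print(features)  # {'visits_last_7d': 3, 'distinct_product_pages': 2}
```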

Finally, implement continuous feedback loops by feeding sales outcomes back into the model. When deals close – whether won or lost – update the AI so it can adjust its internal weights and improve accuracy over time. This ongoing refinement builds on the structured data you’ve already prepared, ensuring the system evolves based on real-world results.

Companies using this approach often see an 8–12% improvement in accuracy month-over-month during the first quarter. For example, at M Studio / M Accelerator, this feedback loop methodology helped clients triple their MQL-to-customer conversion rates – from 1% to 3% – by uncovering patterns that traditional scoring systems completely missed.

Step 4: Automate Scoring and Integrate with Your CRM

Once your AI model is up and running, the next step is to connect it to your CRM for real-time responses. Why is this so important? Studies show that responding to leads within an hour makes companies seven times more likely to engage decision-makers. A response within just five minutes can be up to 100 times more effective than waiting 30 minutes. By automating CRM integration, you can act on AI insights instantly, turning them into revenue opportunities as they happen.


Automation Tools and Workflows

Tools like N8N, Zapier, and Make make it easy to link your AI model with your CRM. They use webhooks to trigger workflows as soon as a lead interacts with your system – whether that’s submitting a form, visiting a pricing page, or downloading a resource. These workflows can enrich lead profiles using data enrichment APIs like Brandfetch or Clearbit, collecting details such as company size, funding stage, and industry. Once enriched, the AI scores the lead and prioritizes it for action.

At M Studio / M Accelerator, we’ve built workflows for over 500 founders using tools like N8N and GPT-4o-mini. For example, when a lead enters the system, the data is processed, enriched, and scored in seconds. High-value leads (75+ points) trigger instant Slack notifications and are routed to the right team member. This ensures no time is wasted on following up with the most promising prospects.

Real-time updates are critical. If a lead downloads a pricing guide or requests a demo, their score should immediately reflect this new activity. A bi-directional sync between your CRM and AI ensures that updates flow both ways. For instance, when a sales rep updates a lead’s status in the CRM, that information feeds back into the AI model, refining future predictions. Companies using this approach often see revenue increases of up to 20% because they’re acting on fresh intent.

For prioritization, you can create tiered SLAs (Service Level Agreements) based on lead scores. Here’s a framework to guide your setup:

| Lead Type | Score Range | First Contact SLA | Follow-up Cadence |
|---|---|---|---|
| Demo request | 75+ | < 5 minutes | Call + email same day |
| MQL – pricing page | 50–74 | < 2 hours | Email on day 1, call on day 2 |
| MQL – content download | 30–49 | < 4 hours | Personalized email referencing download |
| Below threshold | < 30 | Next business day | SDR research + personalized outreach |
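A tiered SLA like the one in the table can be expressed as a small lookup that your automation workflow calls after scoring. The sketch below is one possible shape: the tier names and the `route` and `needs_instant_alert` helpers are hypothetical, and "next business day" is approximated as 24 hours for simplicity.

```python
from datetime import timedelta

# (minimum score, tier name, first-contact SLA), checked from highest to lowest.
# Tier names are hypothetical; "next business day" is simplified to 24 hours.
SLA_TIERS = [
    (75, "demo_request", timedelta(minutes=5)),
    (50, "mql_pricing", timedelta(hours=2)),
    (30, "mql_content", timedelta(hours=4)),
    (0, "below_threshold", timedelta(days=1)),
]


def route(score: int) -> tuple[str, timedelta]:
    """Return the tier name and first-contact SLA for a lead score."""
    for minimum, name, sla in SLA_TIERS:
        if score >= minimum:
            return name, sla
    # Scores below zero fall into the lowest tier.
    return SLA_TIERS[-1][1], SLA_TIERS[-1][2]


def needs_instant_alert(score: int) -> bool:
    """High-value leads (75+) trigger an immediate notification."""
    return score >= 75


tier_name, sla = route(82)
print(tier_name, sla)  # demo_request 0:05:00
```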

This prioritization ensures your team focuses on the highest-intent leads first. As Casey Patterson, Manager of Account-Based Marketing at Fivetran, explained:

"I think if I took Demandbase away from the sales team, I’d have an uprising on my hands."


How to Test and Refine Your Integrations

Once your workflows are set up, thorough testing is essential to ensure everything runs smoothly. Start by testing edge cases. For example, create a "perfect-fit" lead (e.g., a high-intent enterprise prospect) and a "terrible-fit" lead (e.g., a personal email or competitor domain). Run these through your workflow to confirm that scoring, routing, and alerts work as expected. If a high-value lead doesn’t trigger immediate action or an unsuitable lead slips through, adjustments are needed.

Human-in-the-loop validation is another important step. Have experienced sales reps manually score a sample of leads and compare their assessments to the AI’s scores. Aim for at least 85% alignment. If there’s a significant mismatch, revisit your scoring criteria or refine the AI prompts. This process builds trust in the system and ensures it aligns with the team’s expertise.
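One way to quantify alignment is to check how often the AI and a sales rep place the same lead in the same priority tier. That definition is an assumption for this sketch, since alignment could also be measured on raw scores. The tier boundaries (75, 50, 30) and the function names are likewise illustrative.

```python
def tier(score: int) -> str:
    """Map a 0-100 score to a priority tier (boundaries are illustrative)."""
    if score >= 75:
        return "hot"
    if score >= 50:
        return "warm"
    if score >= 30:
        return "cool"
    return "cold"


def alignment_rate(ai_scores: list[int], rep_scores: list[int]) -> float:
    """Share of leads where the AI and the rep agree on the tier."""
    if not ai_scores or len(ai_scores) != len(rep_scores):
        raise ValueError("score lists must be non-empty and the same length")
    matches = sum(tier(a) == tier(r) for a, r in zip(ai_scores, rep_scores))
    return matches / len(ai_scores)


ai = [90, 60, 40, 20, 78]
rep = [80, 55, 55, 10, 70]
rate = alignment_rate(ai, rep)
print(f"{rate:.0%}")  # 60%
```

In this made-up sample the rate is well under the 85% target, which would be the cue to revisit the scoring criteria or prompts.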

Don’t forget about score decay to keep your focus on active leads. For example, you can reduce a lead’s score by 20% after 30 days of inactivity, 50% after 60 days, and reset it to zero after 90 days. Here’s a simple guide:

| Inactivity Period | Decay Action | Condition |
|---|---|---|
| 30 days | Reduce score by 20% | No email open, click, or site visit |
| 60 days | Reduce score by 50% | No activity in 60 days |
| 90 days | Reset score to 0 | Transition into a re-engagement sequence |
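The decay schedule in the table could be implemented along these lines. This sketch assumes each percentage applies to the lead's last active score rather than compounding on an already-decayed value, so a lead at 60 days of inactivity sits at 50% of its original score, not 40%. The `decayed_score` name is hypothetical.

```python
def decayed_score(score: float, days_inactive: int) -> float:
    """Apply the tiered decay schedule to a lead's last active score.

    Assumption: percentages are taken from the original score, not compounded.
    """
    if days_inactive >= 90:
        return 0.0          # reset; move the lead to a re-engagement sequence
    if days_inactive >= 60:
        return score * 0.5  # reduce by 50%
    if days_inactive >= 30:
        return score * 0.8  # reduce by 20%
    return float(score)     # still active: no decay


print(decayed_score(80, 10))  # 80.0
print(decayed_score(80, 60))  # 40.0
print(decayed_score(80, 95))  # 0.0
```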

Finally, schedule monthly reviews to identify any low-confidence scores or misclassifications. Use this feedback to update your AI model and improve its accuracy. At M Accelerator, our GTM Engineering service specializes in building and refining these workflows, ensuring they deliver measurable results from day one.

Step 5: Monitor, Refine, and Scale Your System

Your AI scoring system isn’t something you can set up and leave to run indefinitely. Markets change, buyer behaviors shift, and strategies that worked last quarter might not hold up today. To keep your system effective, regular monitoring is a must. By building on the automated workflows and real-time CRM integrations discussed earlier, you can ensure your system stays accurate and adapts to changing conditions. For more tips and strategies to fine-tune your AI-powered engagement scoring system, check out our free AI Acceleration Newsletter.

Skipping regular updates can lead to model degradation, making predictions less reliable. To prevent this, monitor key metrics, conduct monthly audits, and refine your system based on actual sales outcomes. With automated integrations in place, the next step is to focus on consistent tracking and improvement.

Key Metrics to Track

Start by looking at the conversion rate by score range. If leads scoring 80–100 points are converting at the same rate as those scoring 40–60, your model isn’t effectively distinguishing high-quality leads. Ideally, higher scores should consistently correlate with better outcomes.
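A quick way to run this check is to group leads into score bands and compare conversion rates across them. The sketch below assumes each lead is a `(score, converted)` pair exported from your CRM; the band boundaries are illustrative and the `conversion_by_band` name is hypothetical.

```python
from collections import defaultdict

# Illustrative, non-overlapping score bands (inclusive on both ends).
BANDS = [(80, 100), (60, 79), (40, 59), (0, 39)]


def conversion_by_band(leads: list[tuple[int, bool]]) -> dict[str, float]:
    """Return the conversion rate for each score band that contains leads."""
    totals: dict[str, int] = defaultdict(int)
    wins: dict[str, int] = defaultdict(int)
    for score, converted in leads:
        for low, high in BANDS:
            if low <= score <= high:
                label = f"{low}-{high}"
                totals[label] += 1
                wins[label] += int(converted)
                break
    return {label: wins[label] / totals[label] for label in totals}


leads = [
    (85, True), (90, True), (82, False),
    (45, False), (50, True), (55, False), (41, False),
]
rates = conversion_by_band(leads)
print(rates["40-59"])  # 0.25
```

If the top band's rate is not clearly above the middle bands, the weights are not separating leads well and need recalibration.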

Another critical metric is the Sales Acceptance Rate (SAR) – the percentage of leads your sales team deems qualified. If your SAR falls below 60%, your scoring criteria might be too lenient and need tightening.

Pipeline velocity is equally important. Use this formula to calculate it:

(qualified opportunities × win rate × average deal size) ÷ sales cycle length.
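As a worked example of the formula, the sketch below computes pipeline velocity for made-up inputs. The function name and the sample figures are illustrative only; the result is expressed in revenue per day because the sales cycle length is given in days.

```python
def pipeline_velocity(
    qualified_opportunities: int,
    win_rate: float,
    average_deal_size: float,
    sales_cycle_days: float,
) -> float:
    """(qualified opportunities x win rate x average deal size) / sales cycle length."""
    if sales_cycle_days <= 0:
        raise ValueError("sales cycle length must be positive")
    return qualified_opportunities * win_rate * average_deal_size / sales_cycle_days


# Hypothetical inputs: 40 opportunities, 25% win rate, $20,000 deals, 50-day cycle.
velocity = pipeline_velocity(40, 0.25, 20_000, 50)
print(velocity)  # 4000.0 (dollars per day)
```

Computing this separately for high-scoring and mid-scoring leads shows whether the top tier really moves through the funnel faster.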

A well-optimized system should show high-scoring leads moving through the funnel faster and closing at higher rates. Additionally, track speed to contact, which measures how quickly you reach out to a lead after they hit a high-score threshold. Studies show that contacting a lead within five minutes can be 100 times more effective than waiting 30 minutes.

Lastly, monitor the win rate by score band. If leads scoring 75+ aren’t closing at significantly higher rates than mid-tier leads, you may need to adjust your scoring weights. Companies that align their Marketing Qualified Lead (MQL) definitions with actual sales results have reported a 23% reduction in sales cycle length and a 77% boost in lead-generation ROI. These metrics provide the foundation for keeping your AI scoring system sharp and effective.

How to Improve Your System Over Time

Conducting monthly audits is critical. Analyze outliers – like low-scoring leads that convert or high-scoring leads that don’t – and adjust point values quarterly. Use A/B testing to validate any changes. For instance, if a lead with a personal email domain (usually a negative indicator) converts into a valuable deal, it might be time to revisit your disqualification rules.

Plan for quarterly recalibration by tweaking one variable at a time. For example, increasing the weight for visits to your pricing page can help you measure its impact more effectively. At M Accelerator, our Elite Founders program includes weekly sessions dedicated to these kinds of refinements, ensuring your system evolves alongside your business needs.

Don’t forget to review your score decay settings. Adjust parameters like reducing scores by 20% after 30 days or 50% after 60 days to keep your pipeline fresh and avoid wasting resources on stale leads. Finally, schedule regular sales and marketing alignment sessions to review leads at different score levels. When both teams agree on what qualifies as "sales-ready", you can eliminate inefficiencies and focus on leads with real potential.

Conclusion: From Manual Scoring to AI-Powered Efficiency

Transitioning from manual lead scoring to an AI-driven approach reshapes how your sales team operates. With the framework outlined here, you no longer depend on rigid "if-then" rules or on sifting through endless leads by hand. Instead, you’re equipped with a dynamic model that evaluates thousands of behavioral signals to estimate the likelihood of conversion. It scores new leads within seconds of an interaction, and with continuous feedback loops, accuracy often improves by 8–12% month over month during the first quarter. For more insights on using AI to sharpen engagement scoring and boost sales efficiency, subscribe to our AI Acceleration Newsletter for weekly tips and strategies.

The impact is immediate. Companies adopting AI-powered lead scoring report up to a 77% increase in lead-generation ROI, with SQL conversion rates climbing by 20–40%. Sales cycles shrink by an average of 23%, freeing up your team to focus on closing deals rather than chasing cold leads.

"The biggest ROI isn’t just in the hot leads identified; it’s in the 95% of leads you can safely ignore, saving countless hours of SDR prospecting and AE follow-up." – BizAI

At M Studio, we’ve helped over 500 founders, who have collectively raised more than $75M in funding, implement AI systems. Our hands-on approach allows us to deploy live automations tailored to your needs. Using the strategies covered in this guide, we’ve helped clients cut sales cycles by 50% and boost conversion rates by 40%.

If you’re ready to upgrade to an AI-powered revenue engine, check out our Elite Founders program. This includes weekly implementation sessions, direct Slack support, pre-built templates, and algorithms designed to work without requiring technical expertise. Together, we’ll create a scalable system that prioritizes high-value leads, sends real-time alerts, and continuously adapts to your sales data.

FAQs

What’s the minimum data I need to start AI lead scoring?

To get started with AI lead scoring, you’ll usually need a minimum of 1,000 historical leads with clear outcomes – like whether they converted or were disqualified. Additionally, some platforms suggest having roughly 40 qualified leads and 40 disqualified leads that were created and closed within a certain timeframe. This helps ensure the AI system has enough data to learn effectively and provide reliable scoring results.

How do I choose weights and decay rules without overfitting?

Overfitting happens when weights and decay rules are tuned so closely to past leads that they stop predicting new ones. To limit it, change one weight or rule at a time, check each change against leads that were not used to set it (a holdout sample), and retrain or recalibrate the model regularly using real-world outcomes, such as actual conversions, to keep it aligned with current trends and behaviors.

Additionally, establish clear decay criteria. For example, you could lower engagement scores for leads that have been inactive for a specific period. This approach helps the system stay dynamic, steer clear of static, outdated rules, and maintain its accuracy in prioritizing leads effectively.

How can I validate AI scores so sales teams trust them?

To build trust in AI-generated scores among sales teams, it’s essential to create a feedback loop where real sales outcomes are used to refine the scoring model over time. Establish clear thresholds that define what makes a lead "sales-ready", and revisit these thresholds regularly using performance data to keep them relevant.

Integrating automated alerts for significant changes in lead scores can also help sales teams stay informed and act promptly. By continuously improving the model with insights from actual outcomes, you’ll ensure the scores align closely with the quality of leads, boosting both credibility and confidence in the system.